University of Toronto assistant law Prof. Anthony Niblett knows exactly how to teach students, but what about teaching computers?

“That’s quite different,” said Niblett, who’s part of a U of T team that’s training Watson, IBM’s artificial intelligence technology, to use cognitive reasoning to answer questions about tax law.

For now, Niblett and his team are teaching a Watson-based program called Blue J. Legal to determine whether a worker is an employee or an independent contractor, a question that has important implications not only for tax law but also for labour, contract, and tort matters.

Blue J. Legal asks a lawyer some questions about the clients’ work, including their pay structure, where they work, their mobility, the tools they use, and who owns them. It then uses the facts of the case and the thousands of documents available in its system to tell lawyers how likely it is that they’re dealing with an employee or a contract worker, with evidence to support the answer.

“We’re still learning how to better do it. We are still learning how to train it,” said Niblett at an event organized last week by the Centre for Innovation Law and Policy on cognitive computing and the future of legal research.

“Should we be focusing on cases the way we teach students or should we try a different method?” he said during a discussion that highlighted what’s perhaps a new challenge for legal education.

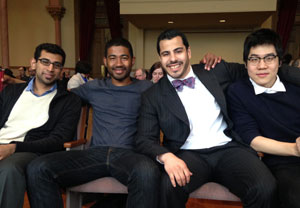

Former computer science students at the University of Toronto are taking another artificial intelligence application, ROSS, to the market after it won second place in a continent-wide competition for programs using IBM’s Watson technology.

Users can ask ROSS a legal question in lay language and in just seconds it will turn billions of documents into snippets of answers that come with a confidence ratio. ROSS will also show users where its answers came from.

The team behind ROSS — Shuai Wang, Jimoh Ovbiagele, Akash Venkat, Pargles Dall’Oglio, and Andrew Arruda — says artificial intelligence is the future of legal research. Although the tool won’t replace lawyers, they say it will help them increase efficiency and eliminate tedious tasks.

But not everyone is quick to endorse artificial intelligence in legal research. Sharon Baker, a librarian at the university’s Bora Laskin Law Library, has doubts about the technology.

“I can see a role for [artificial intelligence] in terms of the facts [of a case] and finding the [applicable] law, but in terms of analysis and the communication of that, I think there still has to be human intelligence and interaction,” Baker said at the event.

With technologies like Watson, which picks up users’ behaviours and preferences to perfect its skills, it’s easy to replicate errors, according to Baker. She noted part of her concern is also that people would start to rely on the confidence rating from artificial intelligence tools instead of doing their own analysis.

But Angus McIntyre, IBM’s Watson development and delivery operations manager in Canada, downplayed the concern. “We find that people who are experts are not interested in the answer; they want to look at the evidence,” he said, adding the technology simply makes professionals better at what they do by very quickly providing them with the most relevant information.

Still, there are cases where Watson simply won’t have an answer to provide, especially in areas that lack case law and are more forward-looking than precedent-based. Constitutional law is an example of that, according to Niblett.

Other challenges in teaching Watson include somehow getting it to understand the concept of overruling and the hierarchy of the courts. There’s also the thorny issue of personal bias by the humans who are teaching Watson how to respond to questions.

For more, see "Get Ross in your pocket."
